Can It Run Within Embedded Constraints? Lessons from Scaling Edge AI

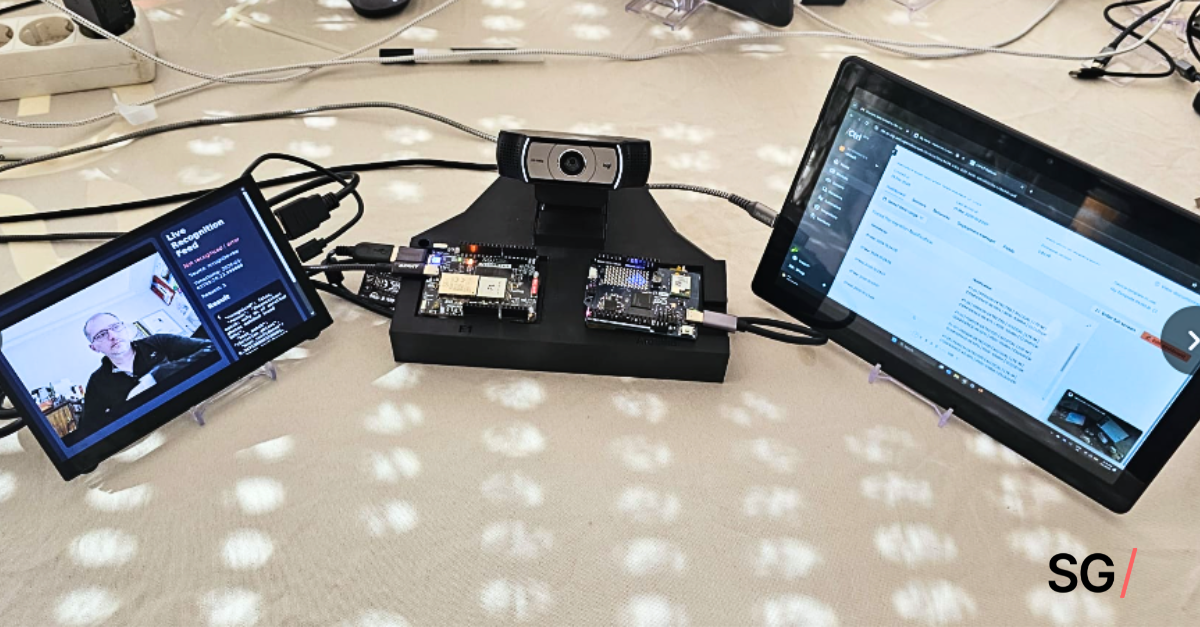

We asked the same question when we started our edge AI project:

“Can it run within embedded constraints?”

At first, we thought the answer was straightforward. The model seemed to fit. Memory usage was acceptable. Latency targets looked achievable. Power requirements were manageable.

But as we began moving toward deployment, where the system needed to do more than run inference in isolation, things got more complicated. The model was sharing resources with everything else on the device — data buffering, communication stacks, logging, retry logic — and together, they were changing the system’s behavior in ways we hadn’t expected.

It was at this point that we realized we needed to look at the question differently. Running within constraints wasn’t just about the model; it was about how the system behaves when everything is active at once.

The Drift: How Subtle Failures Revealed Constraints

Instead of breaking in one dramatic failure, our system drifted. Tasks that ran consistently in the lab started lagging. Memory usage crept up. Peripheral activity interfered with timing. Network retries overlapped. Buffers filled faster than expected. Power draw fluctuated as devices waited for responses.

Each effect was minor on its own, and there was no single cause we could pinpoint. Looking back, the problem was the interactions across compute, memory, power, and connectivity: constraints that only reveal themselves under realistic, continuous operation.

It was a lesson we learned the hard way.

What We Learned About Embedded Constraints (and What to Take Away)

These are the embedded constraints you should be thinking about:

- Compute and memory limits are still the baseline, but they were rarely the problem by themselves. It was task contention and allocation spikes under load that caused unpredictable behavior.

- Power management issues often appeared only when combined with network variability or peripheral activity. The moment you move beyond a dev kit and into a battery-powered device, things change.

- Connectivity was where a lot of early assumptions went out the window. Unstable networks, limited bandwidth, and varying latency: if the system depends on timely data transfer, these factors quickly become limiting, whether you planned for them or not.

And when scaled across dozens or hundreds of devices, these same interactions start compounding quickly.

If you take away one thing, it’s this: A model can fit within constraints and still fail as a system.
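To make that takeaway concrete, here is a minimal sketch of a memory budget check. Everything in it is an assumption for illustration: the component names, the kilobyte figures, and the 512 KB budget are made up and are not measurements from our project. It only shows the shape of the problem: every component passes when checked in isolation, while the worst case with everything active at once does not.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    steady_kb: int  # typical RAM use (hypothetical figure)
    spike_kb: int   # extra RAM during a worst-case burst (hypothetical figure)


def fits_alone(component: Component, budget_kb: int) -> bool:
    """Check one component against the budget as if nothing else were running."""
    return component.steady_kb + component.spike_kb <= budget_kb


def worst_case_kb(components: list[Component]) -> int:
    """Pessimistic total: assume every component hits its spike at the same time."""
    return sum(c.steady_kb + c.spike_kb for c in components)


def report(components: list[Component], budget_kb: int) -> dict:
    total = worst_case_kb(components)
    return {
        "each_fits_alone": all(fits_alone(c, budget_kb) for c in components),
        "worst_case_kb": total,
        "system_fits": total <= budget_kb,
    }


# Illustrative numbers only, not taken from a real device.
BUDGET_KB = 512
COMPONENTS = [
    Component("model_inference", steady_kb=300, spike_kb=40),
    Component("data_buffering", steady_kb=60, spike_kb=50),
    Component("comm_stack", steady_kb=50, spike_kb=30),
    Component("logging", steady_kb=10, spike_kb=10),
]

if __name__ == "__main__":
    print(report(COMPONENTS, BUDGET_KB))
```

With these made-up numbers the model alone needs 340 KB and looks comfortable, but the combined worst case is 550 KB against a 512 KB budget. Summing every spike is deliberately pessimistic; whether the spikes really coincide is exactly what continuous, realistic operation tends to reveal.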

Don’t get so caught up in making the model run that you lose sight of how the system behaves.

In the next article, we’ll get into what changed once we started designing with these constraints in mind from the beginning.

Questions in the meantime? Send them to [email protected]. We’ll be happy to help!