
Building an Access Monitoring System with Edge AI (Part 3)

This article continues from Part 2: Designing with Constraints in Mind: What We Did Differently.

We’ve talked about the constraints and how we’ve designed around them. Let’s now put that architecture into practice, shall we?

The Application: Access Monitoring on the Edge

The use case: detect a person approaching an access point, validate the event locally, and transmit only the information required for monitoring and verification.

We chose this application because it forces several system requirements to hold at the same time:

- low-latency response

- continuous uptime

- constrained bandwidth

- local decision making

- audit visibility

- graceful behavior during network interruptions

Traditional cloud-first architectures struggle here because they depend too heavily on round-trip communication. If the path from image capture through inference to authorization depends on cloud responsiveness, the system becomes increasingly sensitive to:

- latency variability

- unstable connectivity

- bandwidth limitations

- cloud-side processing delays

This introduces operational risk into something that should behave deterministically.

And we all know that access systems cannot afford to “wait for the internet.”

Building the System Around Embedded Constraints

For the proof of concept, we used a fisheye camera from an existing customer deployment. No fancy AI vision sensor for us (yet).

Call us crazy, but we wanted the same constraints that appear in real deployments:

- edge distortion

- inconsistent subject scaling

- wide-scene exposure balancing

- unnecessary scene information outside the primary detection zone

Most embedded systems are not designed in isolation. They inherit environmental limitations, existing infrastructure and deployment decisions made long before the AI layer is introduced.

We weren’t looking to optimize a demo around a perfect sensor; we were looking to validate whether the architecture itself remained stable under imperfect conditions.

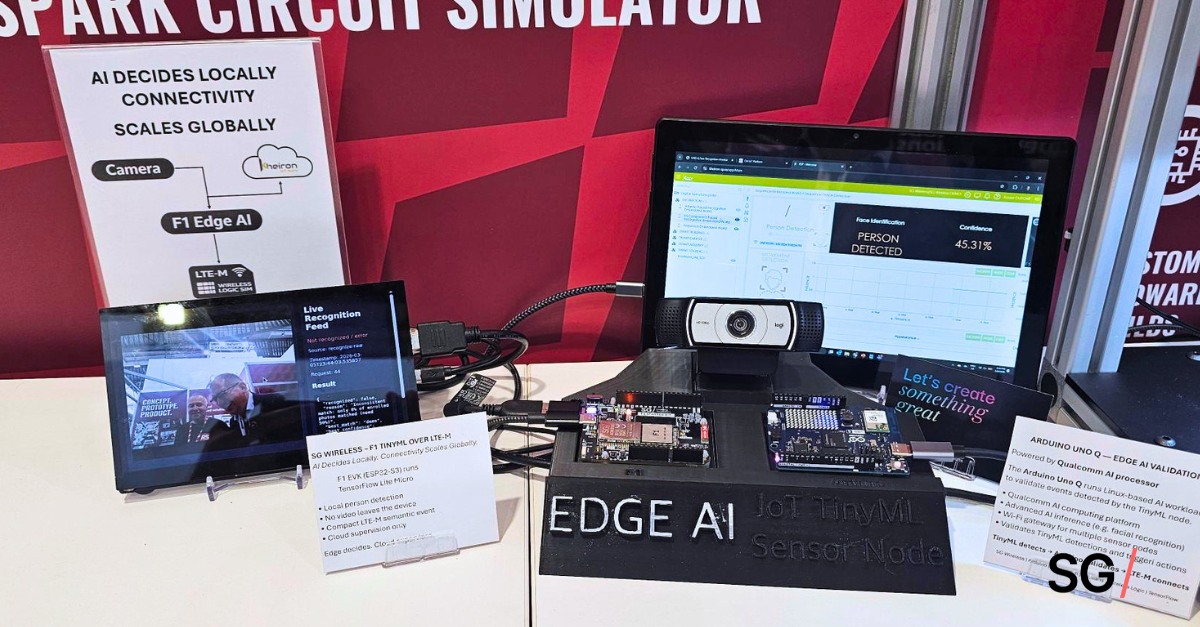

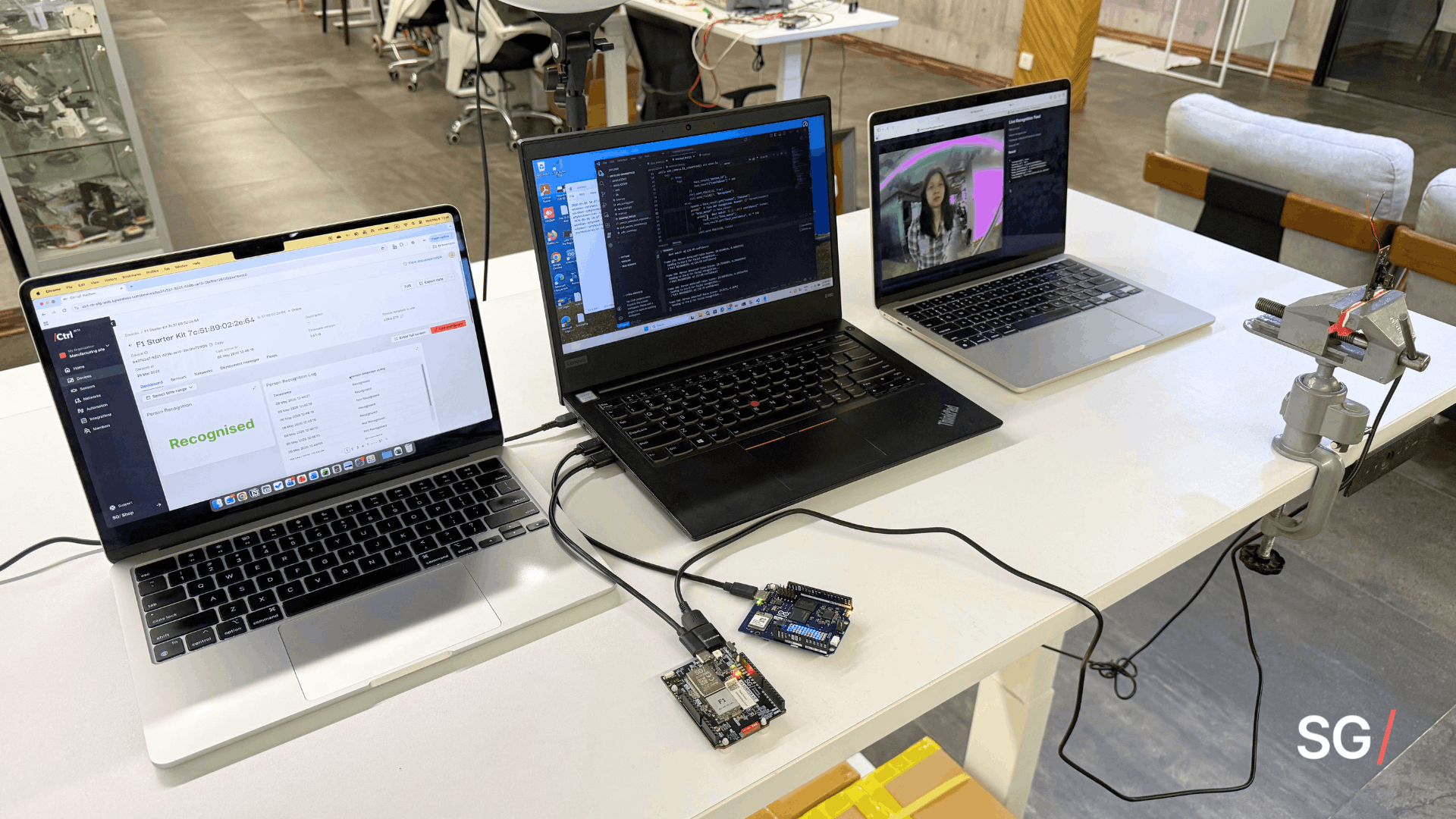
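One way to handle scene information outside the primary detection zone is to discard detections whose center falls outside a fixed region of the fisheye frame before they can become events. The sketch below assumes normalized box coordinates `(ymin, xmin, ymax, xmax)` in `[0, 1]` (the layout TensorFlow Lite SSD models commonly emit) and a circular zone; the `Detection` type, the `ZONE_*` values and the helper names are hypothetical, not taken from our deployment.

```python
import math
from dataclasses import dataclass

@dataclass
class Detection:
    # Hypothetical detection record: normalized box corners in [0, 1] plus a score.
    ymin: float
    xmin: float
    ymax: float
    xmax: float
    score: float

# Hypothetical circular detection zone (center x, center y, radius), normalized.
ZONE_CX, ZONE_CY, ZONE_R = 0.5, 0.6, 0.25

def in_detection_zone(det: Detection, cx: float = ZONE_CX,
                      cy: float = ZONE_CY, r: float = ZONE_R) -> bool:
    """Return True if the center of the box lies inside the circular zone."""
    box_cx = (det.xmin + det.xmax) / 2
    box_cy = (det.ymin + det.ymax) / 2
    return math.hypot(box_cx - cx, box_cy - cy) <= r

def filter_to_zone(dets: list) -> list:
    """Keep only detections whose center falls inside the zone."""
    return [d for d in dets if in_detection_zone(d)]
```

A circle is simply the cheapest shape to test; if the access point sits awkwardly in the distorted frame, a polygon test would be the natural next step.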

Layer 1: Edge Intelligence on the F1 Starter Kit

At the edge layer, the SG Wireless F1 Starter Kit handled local person detection using an INT8-quantized TensorFlow MobileNet SSD model optimized for embedded deployment. The challenge was ensuring inference remained stable while communication, buffering, retries and logging were all running simultaneously within embedded resource limits.
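With an INT8 model, a confidence check can stay in the integer domain. Under TensorFlow Lite's affine quantization scheme, a stored value `q` maps back to `real = scale * (q - zero_point)`, so a float threshold can be converted once into an equivalent integer threshold instead of dequantizing every score. This is a minimal sketch: whether your score tensor is actually INT8 depends on how the model was converted (many SSD exports emit float32 scores from the post-processing op), and the scale and zero point below are illustrative, not read from our model.

```python
import math

def dequantize(q: int, scale: float, zero_point: int) -> float:
    """Affine dequantization: real = scale * (q - zero_point)."""
    return scale * (q - zero_point)

def int8_threshold(threshold: float, scale: float, zero_point: int) -> int:
    """Smallest integer q whose dequantized value is >= threshold (assumes scale > 0).

    scale * (q - zp) >= t  <=>  q >= t / scale + zp, and q is an integer,
    so take the ceiling. No clamping is needed: a result above 127 simply
    means no int8 score can pass, and one below -128 means every score passes.
    """
    return math.ceil(threshold / scale + zero_point)

# Illustrative quantization parameters for a score tensor covering [0, 1).
SCALE, ZERO_POINT = 1 / 256, -128
Q_THRESH = int8_threshold(0.5, SCALE, ZERO_POINT)

def passes(q_score: int) -> bool:
    # Integer comparison only; no per-detection float math.
    return q_score >= Q_THRESH
```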

Key design decisions at the edge included:

- Local inference execution to avoid cloud-dependent response latency

- Event-based output instead of continuous image streaming

- Pre-processing and confidence filtering to reduce unnecessary transmission load
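Event-based output means per-frame detector results are collapsed into state changes, so the link carries one message when a person appears rather than one per frame. A minimal sketch, assuming a hypothetical `PresenceTracker` that takes the best score per frame, applies a confidence threshold, and requires a few consecutive agreeing frames before changing state (a simple debounce). The threshold and frame count are placeholders, not our tuned values.

```python
from typing import Optional

class PresenceTracker:
    """Turn per-frame confidence scores into 'arrived' / 'left' events.

    Hypothetical helper: a frame counts as 'person seen' if its best score
    clears `threshold`; the state only flips after `confirm_frames`
    consecutive frames disagree with it, which suppresses one-frame flicker.
    """

    def __init__(self, threshold: float = 0.6, confirm_frames: int = 3):
        self.threshold = threshold
        self.confirm_frames = confirm_frames
        self.present = False
        self._streak = 0  # consecutive frames disagreeing with the current state

    def update(self, best_score: float) -> Optional[str]:
        seen = best_score >= self.threshold
        if seen == self.present:
            self._streak = 0
            return None
        self._streak += 1
        if self._streak < self.confirm_frames:
            return None
        self.present = seen
        self._streak = 0
        return "arrived" if seen else "left"
```

Fed the scores 0.1, 0.7, 0.2, 0.8, 0.9, 0.95, 0.9, 0.1, 0.1, 0.1, this tracker emits exactly two events, "arrived" and then "left"; the isolated 0.7 frame never reaches the link.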

Layer 2: LTE-M / NB-IoT for Connectivity

Connectivity was handled through the Sequans GM02S Monarch 2 platform using LTE-M / NB-IoT. Earlier development exposed how quickly unstable connectivity can affect embedded system behavior, especially once retries and buffering, amplified by latency variation, begin competing for resources alongside inference tasks.

To reduce this impact, we designed the communication layer around transmitting lightweight events rather than maintaining continuous cloud synchronization.
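To make "lightweight events" concrete: a fixed binary layout keeps every message the same small size, which makes bandwidth use over LTE-M / NB-IoT easy to predict. The field set below (sequence number, Unix timestamp, event type, confidence as a percentage) and the `EVENT_FORMAT` layout are hypothetical, not our actual wire format.

```python
import struct

# Hypothetical wire format, little-endian with no padding:
#   uint32 seq | uint32 unix_ts | uint8 event_type | uint8 confidence_pct
EVENT_FORMAT = "<IIBB"
EVENT_SIZE = struct.calcsize(EVENT_FORMAT)  # 10 bytes

EVENT_TYPES = {"arrived": 1, "left": 2}
EVENT_NAMES = {v: k for k, v in EVENT_TYPES.items()}

def encode_event(seq: int, unix_ts: int, event: str, confidence: float) -> bytes:
    # Confidence is sent as a whole percentage to fit in one byte.
    pct = max(0, min(100, round(confidence * 100)))
    return struct.pack(EVENT_FORMAT, seq, unix_ts, EVENT_TYPES[event], pct)

def decode_event(payload: bytes) -> dict:
    seq, unix_ts, etype, pct = struct.unpack(EVENT_FORMAT, payload)
    return {"seq": seq, "ts": unix_ts, "event": EVENT_NAMES[etype],
            "confidence": pct / 100}
```

Compared with a JSON object carrying the same fields, a fixed layout avoids repeating key names in every message; the trade-off is that device and backend must agree on the format version.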

Key connectivity considerations included:

- Small event payloads to keep bandwidth usage predictable

- Local event buffering during temporary network interruptions

- Decoupling transmission timing from inference execution
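Local buffering and decoupled transmission fit together as a bounded store-and-forward queue: the inference loop only appends (it never waits on the modem), and a separate sender drains the queue whenever the link is up, removing an event only after it has been sent. A minimal sketch under those assumptions; `EventBuffer`, `drain` and the `send` callback are hypothetical names, and dropping the oldest event when full is one possible policy, not necessarily the one we used.

```python
import threading
from collections import deque
from typing import Callable

class EventBuffer:
    """Bounded, thread-safe FIFO shared by the inference loop and the sender."""

    def __init__(self, capacity: int):
        self._q = deque()
        self._capacity = capacity
        self._lock = threading.Lock()
        self._head = 0  # absolute position of the oldest queued event
        self.dropped = 0

    def push(self, event: bytes) -> None:
        # Called from the inference loop: O(1), never blocks on the network.
        with self._lock:
            if len(self._q) >= self._capacity:
                self._q.popleft()  # drop-oldest keeps memory bounded
                self._head += 1
                self.dropped += 1
            self._q.append(event)

    def peek(self):
        """Return (position, event) for the oldest event, or None if empty."""
        with self._lock:
            return (self._head, self._q[0]) if self._q else None

    def ack(self, position: int) -> None:
        """Remove the head only if it is still the event that was sent."""
        with self._lock:
            if self._q and self._head == position:
                self._q.popleft()
                self._head += 1

    def __len__(self) -> int:
        with self._lock:
            return len(self._q)

def drain(buf: EventBuffer, send: Callable[[bytes], bool]) -> int:
    """Send queued events oldest-first; stop at the first failure."""
    sent = 0
    while (item := buf.peek()) is not None:
        position, event = item
        if not send(event):
            break  # link down: the event stays queued for the next attempt
        buf.ack(position)
        sent += 1
    return sent
```

In a deployment, `drain` would run in its own thread or RTOS task with a pause between attempts, so a stalled modem can delay delivery but never inference.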

Layer 3: Cloud Visibility Without Cloud Dependency

The cloud layer handled monitoring, event visibility and audit logging, but it was intentionally removed from the real-time operational path. The system continued running locally even during connectivity interruptions, with events queued and transmitted once communication recovered.

Separating cloud orchestration from device-side operation was one of the most important architectural decisions we made for the system.

Key cloud-layer considerations included:

- Cloud used for monitoring and orchestration, not real-time inference

- Buffered event synchronization during connectivity loss

- Centralized visibility without introducing operational dependency
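Buffered synchronization implies replays: if the device sends an event but the acknowledgement is lost when the link drops, the same event is sent again after recovery. Keying each event on the device ID plus a per-device sequence number makes audit-log ingestion idempotent. The sketch below is a hypothetical in-memory version; a production backend would more typically enforce the same key with a unique constraint in its database.

```python
from dataclasses import dataclass, field

@dataclass
class AuditLog:
    """Hypothetical idempotent ingest: repeats of (device_id, seq) are ignored."""
    entries: list = field(default_factory=list)
    _seen: set = field(default_factory=set)

    def ingest(self, device_id: str, seq: int, payload: dict) -> bool:
        key = (device_id, seq)
        if key in self._seen:
            return False  # replay after a lost ack: already recorded
        self._seen.add(key)
        self.entries.append({"device": device_id, "seq": seq, **payload})
        return True

    def ordered(self, device_id: str) -> list:
        """Entries for one device in sequence order, regardless of arrival order."""
        return sorted((e for e in self.entries if e["device"] == device_id),
                      key=lambda e: e["seq"])
```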

What We Were Actually Validating

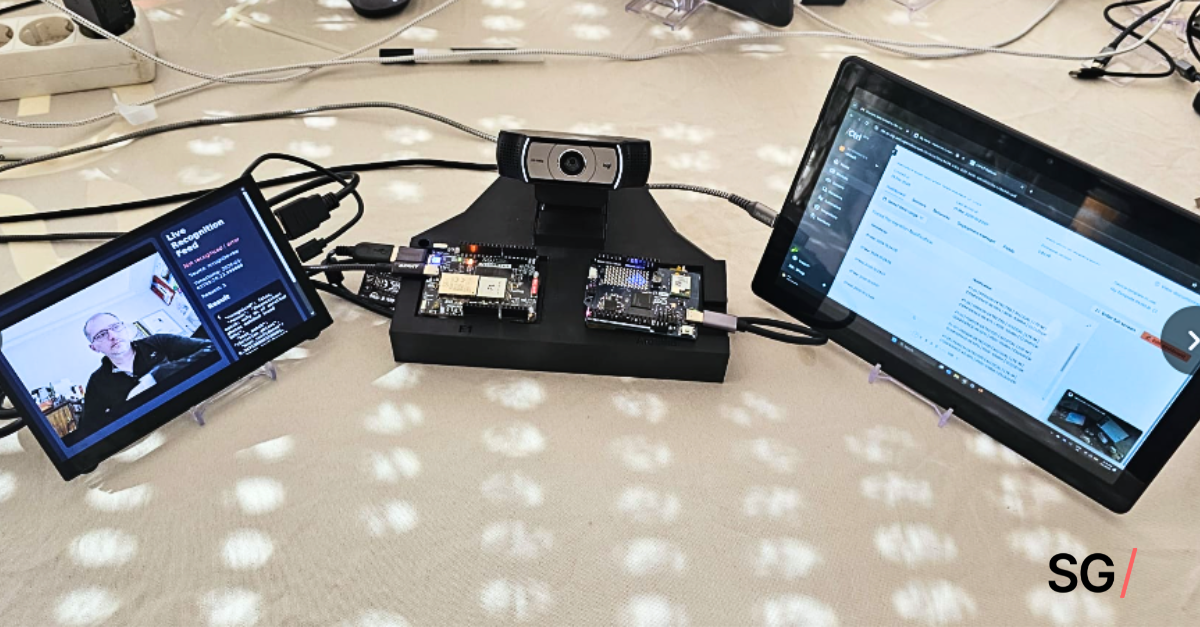

We agree: it's not polished. But it let us validate that the architecture could sustain all of the following at the same time without destabilizing the rest of the system:

- local AI inference

- constrained cellular communication

- event buffering

- retry handling

- cloud synchronization

- long-duration embedded operation

That combination is usually where embedded AI projects start drifting from proof of concept into deployment problems: the surrounding system behavior was never designed around real operational constraints in the first place.
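Retry handling is where connectivity problems turn into resource problems: tight retry loops burn power and airtime, and many devices retrying on the same schedule can hit the network together after an outage. A common mitigation, sketched here as an assumption rather than a description of our firmware, is capped exponential backoff with "full jitter": wait a random time between zero and `min(cap, base * 2**attempt)` seconds. The `base`, `cap` and `max_attempts` values are placeholders.

```python
import random
import time
from typing import Callable, Optional

def backoff_delay(attempt: int, base: float = 2.0, cap: float = 300.0,
                  rng: Optional[random.Random] = None) -> float:
    """Seconds to wait before retry `attempt` (0-based), using full jitter."""
    rng = rng or random
    attempt = min(attempt, 30)  # avoid float overflow for very large attempt counts
    ceiling = min(cap, base * (2 ** attempt))
    return rng.uniform(0, ceiling)

def send_with_retries(send: Callable[[bytes], bool], payload: bytes,
                      max_attempts: int = 5,
                      sleep: Callable[[float], None] = time.sleep,
                      rng: Optional[random.Random] = None) -> bool:
    """Try `send` up to `max_attempts` times, backing off between failures."""
    for attempt in range(max_attempts):
        if send(payload):
            return True
        if attempt < max_attempts - 1:
            sleep(backoff_delay(attempt, rng=rng))
    return False  # caller keeps the event buffered and retries later
```

Capping both the delay and the attempt count keeps retry cost bounded; anything still unsent simply stays in the local buffer instead of accumulating in retry state.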

The Final Takeaway

What this project reinforced for us is that deployable edge AI systems are fundamentally architecture-first. Most failures aren’t due to inference — they appear in the interactions between subsystems:

- compute and communication contention

- memory spikes during retries

- unstable network behavior

- blocking cloud dependencies

- unmanaged buffering under load

These problems often remain invisible during isolated testing and only become apparent once everything starts operating together continuously.
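Because these problems surface only when subsystems run together, it helps to exercise them together in a test, even a crude one. The sketch below is a hypothetical discrete-time simulation, not our test rig: a producer stands in for inference, the link is down during scripted outage windows, and a bounded queue sits between them. It reports the queue's high-water mark and drop count, the two numbers that reveal unmanaged buffering before it shows up in the field.

```python
from collections import deque
from typing import List, Tuple

def simulate(ticks: int, produce_every: int, outages: List[Tuple[int, int]],
             capacity: int, send_per_tick: int) -> dict:
    """Step producer, link and bounded queue together for `ticks` steps.

    outages: half-open [start, end) tick ranges during which the link is down.
    """
    queue = deque()
    produced = delivered = dropped = high_water = 0
    for t in range(ticks):
        if t % produce_every == 0:  # stand-in for an inference event
            produced += 1
            if len(queue) >= capacity:
                queue.popleft()  # drop oldest, as a bounded buffer would
                dropped += 1
            queue.append(t)
        high_water = max(high_water, len(queue))
        link_up = not any(start <= t < end for start, end in outages)
        if link_up:
            for _ in range(min(send_per_tick, len(queue))):
                queue.popleft()
                delivered += 1
    return {"produced": produced, "delivered": delivered, "dropped": dropped,
            "queued": len(queue), "high_water": high_water}
```

Lengthening the outage or lowering `send_per_tick` shows how quickly a fixed-size buffer saturates.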

If you take away one thing, make it this: even the best model is limited by how inference, connectivity and operational behavior coexist under real-world conditions.

Build on a foundation that ensures your model holds up under pressure.

If you missed it, read up on:

- Part 1 for lessons on scaling edge AI with embedded constraints, and

- Part 2 for what changed once we started designing with these constraints in mind from the beginning.

Questions or looking for more? Reach out to [email protected]. We’ll be happy to help!