
Designing with Constraints in Mind: What We Did Differently (Part 2)

This article continues from Part 1: Can It Run Within Embedded Constraints? Lessons from Scaling Edge AI.

When we started again, we didn’t begin with the model.

Instead, we started by running everything together under sustained load: inference, communication, buffering, retries and logging.

Our last system drifted because it wasn’t designed to handle these interactions happening at the same time. This time, we were going to account for this in the design itself.

And so we ramped up the pressure. We pushed the system until:

- Memory spiked when retries overlapped with inference.

- Transmission interfered with timing.

- Buffers filled faster than expected when the network slowed down.

By pushing these issues to the surface, we confronted the underlying problem: our model couldn’t run if the system didn’t behave under imperfect conditions.
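To make the buffer point concrete, here is a back-of-the-envelope sketch of how quickly a fixed buffer fills once the uplink slows. All figures are hypothetical and chosen only for illustration; they are not measurements from our system.

```python
import math

def seconds_until_full(capacity_bytes, produce_bps, drain_bps):
    """Time until an initially empty buffer overflows.

    produce_bps and drain_bps are in bytes per second. Returns math.inf
    when the link drains data at least as fast as it is produced.
    """
    net_fill = produce_bps - drain_bps
    if net_fill <= 0:
        return math.inf
    return capacity_bytes / net_fill

# Hypothetical figures: a 64 KiB buffer fed at 4 KiB/s.
CAPACITY = 64 * 1024
PRODUCE = 4 * 1024

healthy = seconds_until_full(CAPACITY, PRODUCE, drain_bps=8 * 1024)   # never fills
degraded = seconds_until_full(CAPACITY, PRODUCE, drain_bps=1 * 1024)  # about 21 s
```

With these assumed numbers, a link that drops to a quarter of the production rate exhausts the buffer in roughly 21 seconds, which is why the slow-network case has to be tested rather than assumed.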

So we turned to the architecture.

What the Architecture Actually Looks Like (and Why It Works)

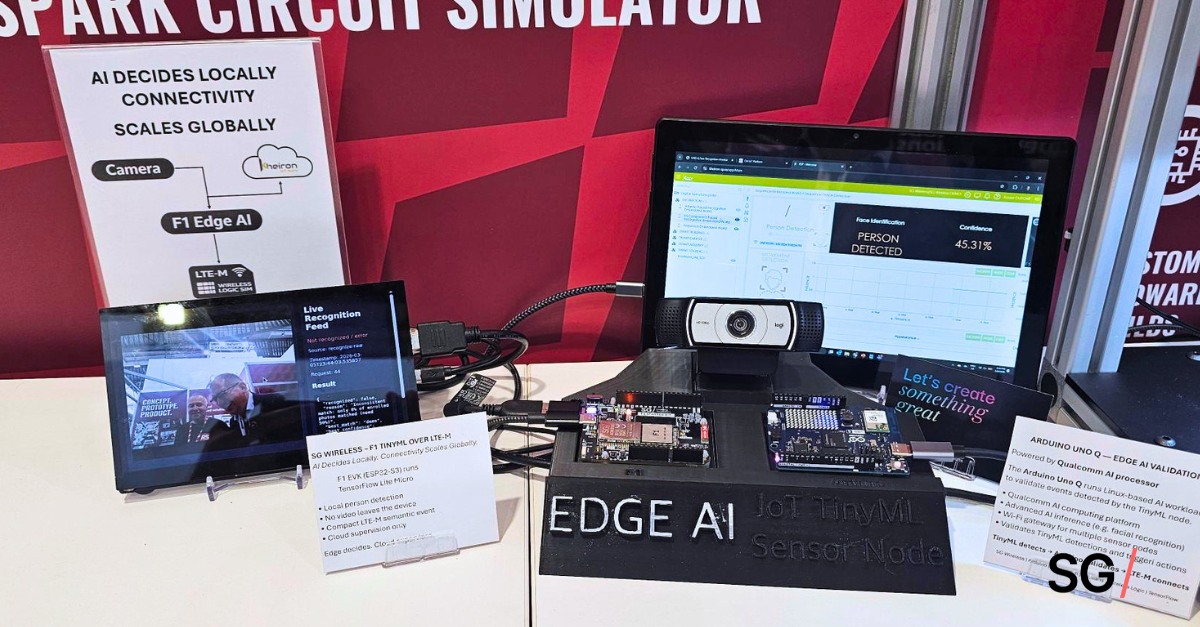

Instead of defaulting to a cloud-first flow, we restructured the system around three clear roles:

#1 The edge layer (device-side)

Inference runs locally on the device. We ran lightweight models (TensorFlow Lite in our case) within embedded constraints, with sub-second response times. The key is not just running inference, but filtering at source: turning continuous input into discrete events.

This removed our need to:

- buffer large volumes of raw data

- synchronize transmission with capture

- compete for memory during peak activity
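One way to picture "filtering at source" is a small state machine that turns a stream of per-frame inference scores into start and end events. The sketch below is illustrative only: the `Event` type, the `to_events` function, and the two thresholds are assumptions, not the logic we shipped. It uses hysteresis (a higher threshold to switch on than to switch off) so that a score hovering near one threshold does not generate a burst of events.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator

@dataclass(frozen=True)
class Event:
    kind: str     # "start" or "end"
    frame: int    # index of the frame that triggered the event
    score: float  # model score at that frame

def to_events(scores: Iterable[float], on: float = 0.8, off: float = 0.5) -> Iterator[Event]:
    """Emit an event only when the detection state changes.

    `on` and `off` are hypothetical thresholds; on > off gives hysteresis.
    """
    active = False
    for i, score in enumerate(scores):
        if not active and score >= on:
            active = True
            yield Event("start", i, score)
        elif active and score <= off:
            active = False
            yield Event("end", i, score)

# Ten per-frame scores collapse into four events.
scores = [0.1, 0.2, 0.85, 0.9, 0.7, 0.6, 0.4, 0.1, 0.95, 0.3]
events = list(to_events(scores))
```

Only the state changes leave the device; the frames in between never need to be buffered or transmitted.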

#2 The connectivity layer (cellular IoT)

We used LTE-M / NB-IoT as a low-bandwidth, resilient backhaul rather than a high-throughput pipe.

That changed how communication behaves:

- events are transmitted, not streams

- retries are smaller and less disruptive

- messages can be buffered and flushed without blocking the system

It also forced us to be more conscious of our design decisions: what actually needs to be sent, and what can be derived locally?
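As a rough illustration of what "sending events, not streams" means in bytes, the sketch below packs an event into a fixed binary layout with Python's standard `struct` module. The wire format, field names, and frame size are all hypothetical; they only show the order-of-magnitude difference between an event and a raw frame.

```python
import struct

# Hypothetical wire format (little-endian, no padding):
#   uint32 device_id, uint32 unix_time, uint8 event_type, uint8 confidence_pct
EVENT_FORMAT = "<IIBB"
EVENT_SIZE = struct.calcsize(EVENT_FORMAT)  # 10 bytes

def encode_event(device_id, unix_time, event_type, confidence):
    """Pack an event; confidence (0.0 to 1.0) is stored as a whole percentage."""
    pct = max(0, min(100, round(confidence * 100)))
    return struct.pack(EVENT_FORMAT, device_id, unix_time, event_type, pct)

def decode_event(payload):
    """Inverse of encode_event; confidence comes back at 1% resolution."""
    device_id, unix_time, event_type, pct = struct.unpack(EVENT_FORMAT, payload)
    return device_id, unix_time, event_type, pct / 100

payload = encode_event(device_id=42, unix_time=1_700_000_000, event_type=1, confidence=0.93)

# Hypothetical comparison: one 96x96 8-bit grayscale frame.
RAW_FRAME_BYTES = 96 * 96
```

Under these assumptions, a single event is 10 bytes while a single raw frame is 9,216 bytes, so a retry of an event costs almost nothing compared with a retry of captured data.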

#3 The cloud layer (non-critical path)

The cloud handles aggregation, monitoring, and orchestration, but it’s no longer part of the real-time loop.

If connectivity drops, inference continues and events are queued, so the system doesn’t stall waiting for an acknowledgment.

Separating this layer allowed us to stabilize the system even in less-than-ideal conditions.
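The decoupling described here can be sketched as a bounded store-and-forward queue. This is a minimal illustration under stated assumptions, not our firmware: `Outbox`, `run`, `infer`, and `send` are hypothetical names, `send` is assumed to return quickly with `True` or `False` instead of blocking, and dropping the oldest event when the queue is full is one possible policy among several.

```python
from collections import deque

class Outbox:
    """Bounded store-and-forward queue; the oldest event is dropped when full."""

    def __init__(self, max_events=256):
        self._queue = deque(maxlen=max_events)

    def put(self, event):
        self._queue.append(event)  # never blocks and never waits for an ack

    def flush(self, send, budget=8):
        """Try to send up to `budget` events; stop at the first failure."""
        sent = 0
        while self._queue and sent < budget:
            if not send(self._queue[0]):
                break              # link is down: keep the event, retry later
            self._queue.popleft()  # remove only after a successful send
            sent += 1
        return sent

    def __len__(self):
        return len(self._queue)

def run(frames, infer, outbox, send):
    """Inference loop: queuing and flushing never gate the next inference."""
    for frame in frames:
        event = infer(frame)
        if event is not None:
            outbox.put(event)
        outbox.flush(send)

# Simulated link that starts down and later recovers.
link = {"up": False}
delivered = []

def send(event):
    if link["up"]:
        delivered.append(event)
    return link["up"]

outbox = Outbox(max_events=4)
run(range(3), lambda frame: f"event-{frame}", outbox, send)  # link down: events queue up
queued_while_down = len(outbox)
link["up"] = True
outbox.flush(send)  # link restored: the backlog drains in order
```

The `budget` argument caps how much work a single flush can do, so a large backlog drains over several loop iterations instead of monopolizing the device when the link comes back.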

What We Did Differently: Designing with Cellular From the Start

Treating connectivity as an afterthought was a big mistake in the first iteration. This time, we treated the realities of cellular as design inputs:

- Bandwidth is limited, so data has to be filtered before it’s sent.

- Latency is variable, so real-time decisions can’t depend on a round trip to the cloud.

- Connectivity drops, so the system has to keep operating without it.

Designing with cellular from the beginning forced us to address issues that would have stayed hidden in controlled set-ups, issues that would otherwise have required redesign and rework further down the line.

By the end, the system behaved consistently even with everything running at the same time.

If you take away one thing, it’s this: systems designed around real network conditions are the ones that get deployed.

Never make assumptions when it comes to connectivity.

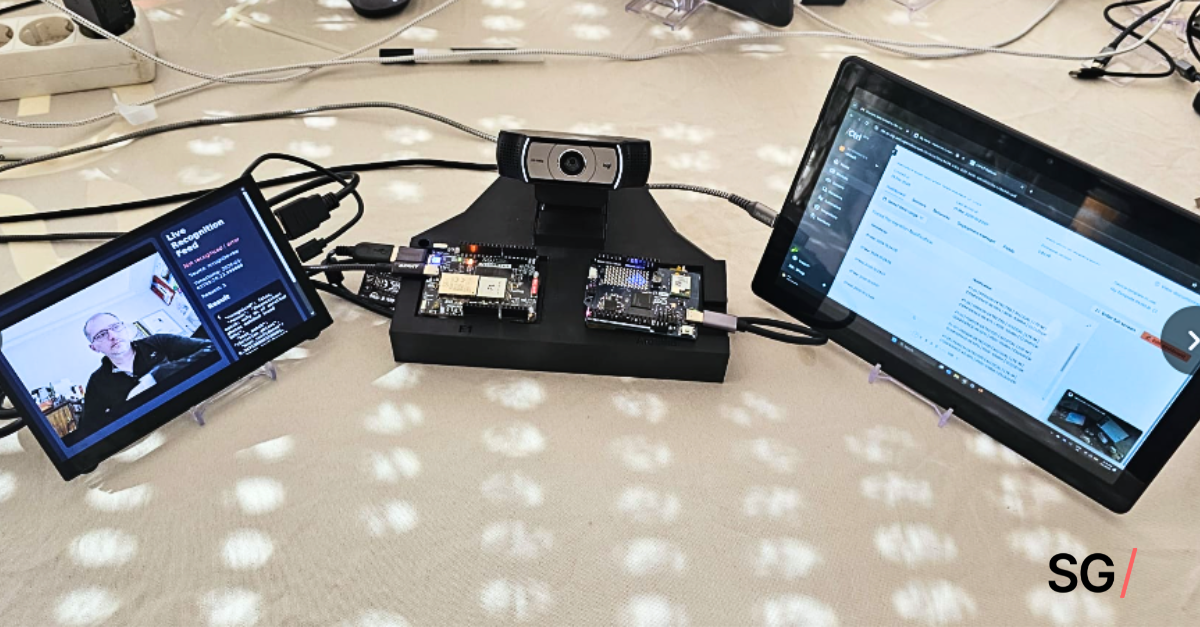

In the final article, we put all of this into practice, breaking down exactly how we built our intelligent access monitoring solution on an edge AI and cellular connectivity architecture with the SG Wireless F1 Starter Kit.

In the meantime, here’s Part 1 in case you missed it.

Questions? Send them to [email protected]. We’ll be happy to help!